Advances in deep learning applied to mineral resource estimation demonstrate that convolutional neural networks can detect complex, nonlinear, multivariate geochemical patterns that materially improve grade prediction in structurally complex ore systems. Empirical results from densely sampled production environments show that hybrid ensembles combining deep learning with geostatistical kriging outperform either method alone, improving both precision and recall, that is, reducing both false positives and false negatives. This paper reframes these results within formal statistical learning theory and extends their implications beyond geology. Deep learning in mineral systems is not merely interpolation; it is probabilistic inference over latent geological processes. Interpreted through empirical risk minimization, posterior updating, regularization, and generalization theory, these methods become mechanisms for compressing uncertainty. That compression has direct consequences for capital allocation, exploration financing, and the dynamic sequencing of real asset development under nonstationary conditions.

Mineral resource estimation has historically relied on spatial interpolation methods grounded in geostatistics, most prominently ordinary kriging. Kriging estimates block grades as weighted linear combinations of neighboring assay values under assumptions of stationarity and a modeled covariance structure. In deposits with moderate spatial continuity, this approach is robust. However, in narrow-vein orogenic systems with heteroscedastic sampling, extreme grade variability, and complex structural controls, kriging faces structural limitations: linear covariance models cannot easily encode nonlinear multivariate relationships between geochemical elements, structural orientation, and alteration assemblages. Deep learning architectures, particularly convolutional neural networks, provide an alternative hypothesis class capable of approximating highly nonlinear conditional mappings from input feature spaces to target grade distributions. Rather than imposing a parametric variogram model, convolutional networks learn hierarchical spatial filters that approximate latent geological relationships embedded in high-dimensional assay data.
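To make the weighted-linear-combination structure of kriging concrete, here is a minimal ordinary kriging sketch in Python with NumPy. It assumes an exponential covariance model with an illustrative sill and range (hypothetical parameters, not fitted to any deposit) and solves the standard ordinary kriging system, in which a Lagrange multiplier forces the weights to sum to one. The sample locations and grades are invented for illustration.

```python
import numpy as np


def exp_cov(h, sill=1.0, range_=50.0):
    """Exponential covariance C(h) = sill * exp(-3h / range_).

    The sill and practical range are illustrative assumptions, not fitted values.
    """
    return sill * np.exp(-3.0 * h / range_)


def ordinary_kriging(coords, values, target, sill=1.0, range_=50.0):
    """Estimate the grade at `target` as a weighted linear combination of `values`.

    Solves [[C, 1], [1^T, 0]] [w; mu] = [c0; 1], where C holds sample-to-sample
    covariances, c0 holds sample-to-target covariances, and the last row forces
    the weights to sum to one (unbiasedness under an unknown constant mean).
    """
    coords = np.asarray(coords, dtype=float)
    values = np.asarray(values, dtype=float)
    n = len(values)
    # Pairwise sample distances and sample-to-target distances
    d = np.linalg.norm(coords[:, None, :] - coords[None, :, :], axis=-1)
    d0 = np.linalg.norm(coords - np.asarray(target, dtype=float), axis=-1)
    # Augmented kriging matrix: covariance block bordered by ones, zero corner
    A = np.ones((n + 1, n + 1))
    A[:n, :n] = exp_cov(d, sill, range_)
    A[n, n] = 0.0
    b = np.ones(n + 1)
    b[:n] = exp_cov(d0, sill, range_)
    sol = np.linalg.solve(A, b)
    weights = sol[:n]  # sol[n] is the Lagrange multiplier
    return float(weights @ values), weights


# Hypothetical sample locations (m) and gold grades (g/t)
coords = [[0, 0], [30, 0], [0, 40], [25, 35]]
grades = [1.2, 3.5, 0.8, 2.1]
estimate, weights = ordinary_kriging(coords, grades, target=[10, 10])
print(f"estimate = {estimate:.3f} g/t, weights = {np.round(weights, 3)}")
```

Because the weights come from a fixed covariance model, the estimate is linear in the assays; a nonlinear interaction between elements is invisible to it, which is the limitation described above.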

The significance of this shift is not computational novelty but inferential depth. The core question becomes how well learning systems approximate the conditional distribution p(Y | X), where Y is block grade and X is the vector of multivariate geochemical and spatial features. The modeling objective is to minimize expected loss over the true but unknown data-generating distribution. Deep learning alters the bias-variance structure of this estimation problem by expanding the capacity of the hypothesis class while controlling generalization error through regularization, such as dropout, and through model selection by cross-validation.
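For squared-error loss, the bias-variance structure referred to here has a standard closed form. Assume Y = f(x) + ε with E[ε] = 0 and Var(ε) = σ², where f(x) = E[Y | X = x], and let \hat f be the model fitted to a random training sample, with the test noise independent of that sample. At a fixed input x, with expectations taken over the training sample and the noise:

$$
\mathbb{E}\big[(Y - \hat f(x))^2 \mid X = x\big]
= \underbrace{\big(f(x) - \mathbb{E}[\hat f(x)]\big)^2}_{\text{bias}^2}
+ \underbrace{\operatorname{Var}\big[\hat f(x)\big]}_{\text{variance}}
+ \underbrace{\sigma^2}_{\text{noise}}
$$

Expanding the hypothesis class typically lowers the first term and raises the second; regularization trades some bias back for lower variance. The noise term is irreducible by any model.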

In formal terms, resource modeling with deep learning can be framed as empirical risk minimization. Given training data sampled from a distribution D, a model fθ is selected from a hypothesis space H to minimize the empirical loss L over the observed samples. The objective is not to interpolate the training data perfectly but to minimize expected out-of-sample error under the true distribution. Model capacity therefore introduces a bias-variance tradeoff. A hypothesis class that is too restrictive underfits, failing to capture geological complexity. One that is excessively flexible risks overfitting, memorizing noise inherent in sparse or biased sampling. Dropout, which randomly deactivates network units during training, and stochastic removal of training samples constrain variance by preventing the model from becoming co-dependent on specific spatial configurations; hold-out validation then measures whether that constraint holds on unseen data. These mechanisms push the model to reconstruct underlying geological trends rather than memorize individual high-grade assays.
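The empirical risk minimization and hold-out logic above can be sketched with a deliberately simple stand-in: nested polynomial hypothesis classes fitted by least squares to a synthetic one-dimensional signal. Polynomial degree substitutes for network capacity here; this is an assumption for illustration, not the model used in the source, and dropout is not shown. The function name `holdout_capacity_selection` and the data are hypothetical.

```python
import numpy as np
from numpy.polynomial import polynomial as P


def holdout_capacity_selection(x, y, degrees, holdout_frac=0.3, seed=0):
    """Empirical risk minimization over nested polynomial hypothesis classes.

    For each degree, least squares minimizes mean squared error on the training
    split (the empirical risk). The hold-out split, never used for fitting,
    estimates out-of-sample risk. Returns {degree: (train_mse, holdout_mse)}.
    """
    rng = np.random.default_rng(seed)
    idx = rng.permutation(len(x))
    n_hold = int(holdout_frac * len(x))
    hold, train = idx[:n_hold], idx[n_hold:]
    results = {}
    for deg in degrees:
        coefs = P.polyfit(x[train], y[train], deg)
        train_mse = np.mean((y[train] - P.polyval(x[train], coefs)) ** 2)
        hold_mse = np.mean((y[hold] - P.polyval(x[hold], coefs)) ** 2)
        results[deg] = (float(train_mse), float(hold_mse))
    return results


# Synthetic signal: smooth trend plus sampling noise (illustrative only)
rng = np.random.default_rng(42)
x = rng.uniform(-1.0, 1.0, 60)
y = np.sin(3.0 * x) + rng.normal(0.0, 0.1, 60)

results = holdout_capacity_selection(x, y, degrees=[1, 3, 12])
for deg, (tr, ho) in results.items():
    print(f"degree {deg:2d}: train MSE {tr:.4f}, hold-out MSE {ho:.4f}")
```

Training error can only fall as the nested classes grow, so it says nothing about overfitting on its own; the hold-out column is the estimate of out-of-sample risk used to pick capacity.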

Deep learning differs from kriging not merely in nonlinearity but in representational structure. Convolutional filters operate over localized spatial neighborhoods, extracting hierarchical features that encode multi-element substitution patterns, structural anisotropy, and alteration signatures. Nonlinear activation functions allow these features to interact nonlinearly, for example multiplicatively, rather than additively, capturing relationships that a linear covariance model cannot represent. Crucially, nonlinear multivariate relationships among elements such as arsenic, tellurium, vanadium, copper, and sulfur can improve grade prediction even when pairwise linear correlation coefficients appear weak. From a statistical perspective, these elements function as latent proxies for mineralogical processes. Their predictive power arises from conditional structure in the joint distribution rather than from simple pairwise covariance.
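The claim that joint structure can be predictive when pairwise correlations look weak is easy to demonstrate on synthetic data. In this sketch, a hypothetical grade depends on the product of two standardized pathfinder signals, `a` and `b` (invented data, not real assays). Each signal's Pearson correlation with grade is near zero, yet a model that includes the interaction term explains most of the variance. The interaction is hand-specified here; a convolutional network would have to learn such terms from data.

```python
import numpy as np

rng = np.random.default_rng(7)
n = 5000
# Two hypothetical standardized pathfinder-element signals
a = rng.normal(size=n)
b = rng.normal(size=n)
# Synthetic grade driven purely by their interaction, plus noise
grade = a * b + rng.normal(scale=0.2, size=n)


def r_squared(features, target):
    """R^2 of an ordinary least-squares fit with intercept."""
    X = np.column_stack([np.ones(len(target))] + features)
    coef, *_ = np.linalg.lstsq(X, target, rcond=None)
    resid = target - X @ coef
    return 1.0 - resid.var() / target.var()


r_a = np.corrcoef(a, grade)[0, 1]
r_b = np.corrcoef(b, grade)[0, 1]
r2_linear = r_squared([a, b], grade)               # additive linear model
r2_interaction = r_squared([a, b, a * b], grade)   # adds the product term

print(f"pairwise r: {r_a:.3f}, {r_b:.3f}")
print(f"R^2 linear: {r2_linear:.3f}, with interaction: {r2_interaction:.3f}")
```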

The strongest empirical results do not come from deep learning alone. Hybrid ensembles combining kriging and neural network outputs outperform either approach independently, and this result is theoretically coherent. Kriging incorporates geological domain constraints and human structural interpretation, while neural networks capture nonlinear latent interactions across multivariate assay space. Ensembling averages prediction errors across models, reducing variance and stabilizing inference. In ensemble theory, combining models whose errors are only partially correlated reduces overall expected loss. If kriging errors arise primarily from the limitations of linear covariance models, and neural network errors arise from overfitting or extrapolation into sparsely sampled regions, a weighted combination can produce a lower aggregate error. The choice between geostatistics and machine learning is therefore not binary; it lies on a continuum of hypothesis class complexity and structural constraint.

Precision and recall expose a related tradeoff. Optimizing precision reduces false positives, improving mine planning confidence. Optimizing recall reduces false negatives, increasing detection of previously missed mineralization. Trading one against the other corresponds to an asymmetric loss function in classification theory, and the optimal operating point depends on the relative cost of missed ore versus waste misclassified as ore. Resource modeling thus becomes a formal expected value optimization under asymmetric loss rather than a purely geological exercise.
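Two pieces of this argument can be made concrete. The first function below computes the blend weight that minimizes error variance for two unbiased estimators with known error variances and error correlation, a textbook result for combining estimators; the second gives the probability threshold that minimizes expected misclassification cost under asymmetric losses. Both are minimal sketches: the function names and numeric inputs are hypothetical, and in practice the variances, correlation, and costs would have to be estimated, for example from hold-out data and mine economics.

```python
import math


def optimal_blend_weight(var_k, var_nn, rho):
    """Weight w on the kriging estimate that minimizes Var(w*e_k + (1-w)*e_nn).

    var_k, var_nn: error variances of two unbiased estimators (kriging, network).
    rho: correlation between their errors. Undefined when rho == 1 and the
    variances are equal (the two estimators are then interchangeable).
    Returns (w, variance of the blended error).
    """
    cov = rho * math.sqrt(var_k * var_nn)
    w = (var_nn - cov) / (var_k + var_nn - 2.0 * cov)
    blended_var = w**2 * var_k + (1 - w) ** 2 * var_nn + 2 * w * (1 - w) * cov
    return w, blended_var


def ore_probability_threshold(cost_false_positive, cost_false_negative):
    """Call a block ore when P(ore) exceeds this value.

    Calling ore costs (1 - p) * C_FP in expectation; calling waste costs p * C_FN.
    Ore is the cheaper call exactly when p > C_FP / (C_FP + C_FN).
    """
    return cost_false_positive / (cost_false_positive + cost_false_negative)


w, blended = optimal_blend_weight(var_k=1.0, var_nn=0.8, rho=0.3)
print(f"kriging weight {w:.3f}, blended error variance {blended:.3f}")
# Missing ore assumed three times as costly as processing misclassified waste
print(ore_probability_threshold(cost_false_positive=1.0, cost_false_negative=3.0))
```

When rho is below the ratio of the smaller to the larger error standard deviation, the optimal weight lies strictly between 0 and 1 and the blend has lower error variance than either estimator alone.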

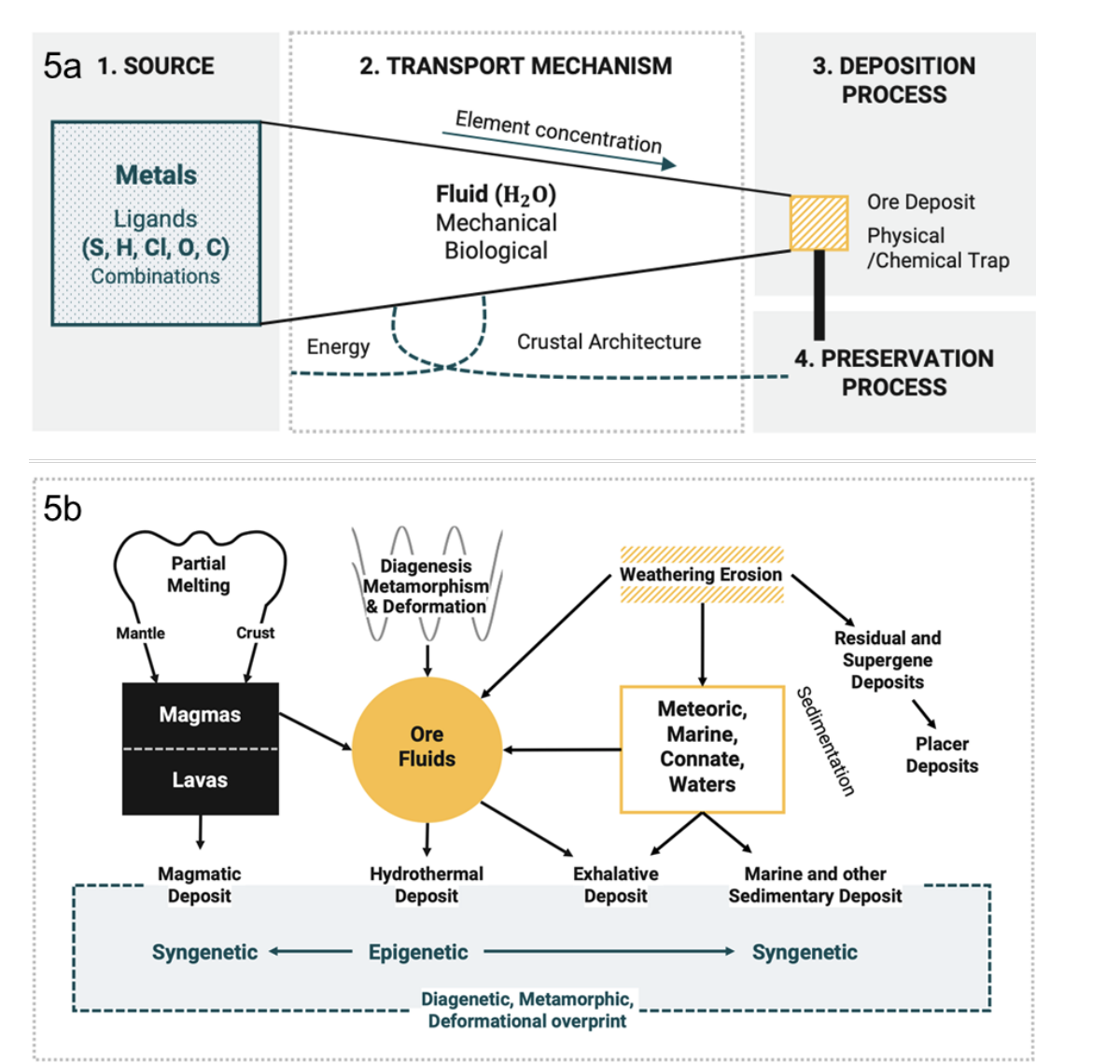

The patterns detected by convolutional networks are not abstract statistical artifacts. Ore deposits form through sequential geological processes including metal sourcing, transport by hydrothermal fluids, depositional trapping, and long-term preservation. Secondary physicochemical and structural overprinting modifies primary mineral distributions. Together these processes generate complex multivariate geochemical signatures.

Deep learning models approximate these latent generative mechanisms indirectly. The network has no explicit knowledge of hydrothermal physics, yet it infers conditional relationships between element assemblages and economic grade because those assemblages encode process history. In statistical terms, the model approximates a high-dimensional mapping from observed features to economic outcome that reflects unobserved geological drivers. This perspective reframes deep learning as process inference rather than black-box automation: the network estimates a function that approximates the conditional expectation of grade given multivariate geological evidence. When properly regularized and validated, the learned mapping can generalize to unseen spatial regions, revealing block clusters consistent with overprinting geological processes that classical interpolation fails to capture.
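The statement that the network estimates a conditional expectation has a precise form under squared-error loss. Write f(x) = E[Y | X = x] and let g be any candidate predictor. The cross term vanishes because E[Y - f(X) | X] = 0, which gives:

$$
\mathbb{E}\big[(Y - g(X))^2\big]
= \mathbb{E}\big[(Y - f(X))^2\big] + \mathbb{E}\big[(f(X) - g(X))^2\big]
\quad\Longrightarrow\quad
\arg\min_{g}\, \mathbb{E}\big[(Y - g(X))^2\big] = f, \qquad f(x) = \mathbb{E}[Y \mid X = x].
$$

Minimizing empirical squared error over a rich but well-regularized hypothesis class is therefore an attempt to estimate the conditional expectation of grade given the geological evidence. The target depends on the loss: absolute error, for example, targets the conditional median instead.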

Geological systems are inherently nonstationary. Spatial continuity varies across structural domains, sampling density changes with production stage, and assay bias differs between laboratory methods. These factors introduce distributional shift between the training and prediction regimes.

Statistical learning theory emphasizes that generalization guarantees assume training and prediction data are drawn from the same distribution. When feature distributions shift, generalization error typically increases. Hybrid modeling mitigates this risk by anchoring predictions partly in domain-constrained kriging surfaces while using neural networks to detect nonlinear deviations. The implication is broader: climate volatility, regulatory change, and supply chain fragmentation introduce analogous nonstationarity into real asset systems, so geological modeling under distributional shift provides a technical analogue for capital allocation under macroeconomic instability.
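One simple way to check for the distributional shift described above is to compare each feature's training distribution with its distribution in the prediction domain. The sketch below computes the two-sample Kolmogorov-Smirnov statistic, the largest gap between two empirical CDFs, in plain NumPy. This diagnostic is a swapped-in illustration, not a method from the source, and the lognormal "assay" samples are synthetic. SciPy's `scipy.stats.ks_2samp` computes the same statistic together with a p-value.

```python
import numpy as np


def ks_statistic(train_feature, new_feature):
    """Two-sample Kolmogorov-Smirnov statistic: max |F_train(t) - F_new(t)|."""
    a = np.sort(np.asarray(train_feature, dtype=float))
    b = np.sort(np.asarray(new_feature, dtype=float))
    # The supremum of the ECDF gap is attained at one of the pooled sample points
    pooled = np.concatenate([a, b])
    cdf_a = np.searchsorted(a, pooled, side="right") / len(a)
    cdf_b = np.searchsorted(b, pooled, side="right") / len(b)
    return float(np.max(np.abs(cdf_a - cdf_b)))


rng = np.random.default_rng(3)
# Synthetic lognormal "assays": training domain, same regime, shifted regime
train = rng.lognormal(mean=0.0, sigma=0.5, size=2000)
same_regime = rng.lognormal(mean=0.0, sigma=0.5, size=2000)
shifted = rng.lognormal(mean=0.4, sigma=0.5, size=2000)

d_same = ks_statistic(train, same_regime)
d_shift = ks_statistic(train, shifted)
print(f"KS same regime: {d_same:.3f}, shifted regime: {d_shift:.3f}")
```

A univariate test per feature can miss shifts in the joint distribution, such as a change in how two elements co-vary, which is precisely the structure a nonlinear model relies on.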

The deeper implication of machine learning in resource modeling lies in uncertainty compression. In early-stage mineral projects, the grade distribution is highly uncertain. Resource classifications such as inferred, indicated, and measured reflect increasing levels of confidence in block grade estimates, and financing terms, cost of capital, and investor appetite depend on those confidence levels.

Deep learning that improves recall without degrading precision effectively increases posterior confidence in economic blocks. From a Bayesian perspective, new model evidence updates prior beliefs about ore continuity and grade distribution, and as posterior variance contracts, the financing risk premium tends to decline. Capital sequencing can therefore be framed as a sequential decision process. Initial exploration produces high-variance posterior distributions, advanced modeling reduces that uncertainty, and investment decisions correspond to thresholds on expected value under the updated distributions. This logic mirrors dynamic programming frameworks in which present actions influence future state distributions. A capital allocator fluent in statistical learning recognizes that machine learning is not merely operational optimization; it changes the timing of capital deployment. Earlier uncertainty compression allows rational engagement sooner in the asset lifecycle while preserving risk discipline.
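Posterior contraction can be illustrated with the simplest conjugate model. The sketch assumes, purely for illustration, that assays are independent normal draws around an unknown mean grade with known variance, and places a normal prior on that mean. Real grade distributions are usually skewed and spatially correlated, so this is a stand-in rather than a resource model; the prior, noise variance, assay values, and 2.0 g/t cutoff are all hypothetical.

```python
import math


def normal_update(prior_mean, prior_var, samples, noise_var):
    """Conjugate normal-normal update for an unknown mean grade.

    Posterior precision = prior precision + n / noise_var; the posterior mean
    is the precision-weighted average of the prior mean and the sample data.
    """
    n = len(samples)
    post_var = 1.0 / (1.0 / prior_var + n / noise_var)
    post_mean = post_var * (prior_mean / prior_var + sum(samples) / noise_var)
    return post_mean, post_var


def prob_above(mean, var, cutoff):
    """P(mean grade > cutoff) under a normal posterior N(mean, var)."""
    z = (cutoff - mean) / math.sqrt(var)
    return 0.5 * math.erfc(z / math.sqrt(2.0))


prior_mean, prior_var, noise_var = 2.0, 1.0, 4.0  # g/t and (g/t)^2, hypothetical
cutoff = 2.0  # hypothetical economic cutoff, g/t
for assays in ([3.0, 3.0], [3.0] * 10):
    m, v = normal_update(prior_mean, prior_var, assays, noise_var)
    print(f"n={len(assays):2d}: posterior mean {m:.3f}, var {v:.3f}, "
          f"P(grade > cutoff) {prob_above(m, v, cutoff):.3f}")
```

Additional consistent assays shrink the posterior variance and raise the probability that the mean grade clears the cutoff. A hypothetical rule that commits capital once that probability crosses a chosen level is one simple instance of the threshold logic described above.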

Real asset capital formation has historically separated geology, engineering, and finance into vertical silos. Deep learning integration can collapse these silos into a unified probabilistic framework: geological inference becomes statistical learning, model calibration becomes risk pricing, and precision-recall tradeoffs become economic optimization under asymmetric loss. The strategic advantage emerges not from building proprietary neural architectures but from understanding how hypothesis classes approximate unknown functions, how regularization governs variance, how ensemble methods exploit imperfectly correlated errors, and how posterior updating reshapes financing thresholds. In this view, artificial intelligence is not a technological overlay but a formal system for estimating conditional structure under incomplete information. Its relevance to mining, agriculture, energy infrastructure, and logistics lies in its ability to tighten probability distributions around economic outcomes.

Deep learning applied to mineral resource estimation demonstrates that nonlinear multivariate pattern recognition materially improves grade prediction in complex ore systems. Hybrid ensembles combining geostatistics and neural networks provide superior performance by balancing structural domain knowledge with latent pattern detection. These models approximate geological process signatures through empirical risk minimization and controlled capacity expansion. The broader implication extends beyond resource modeling. Machine learning is fundamentally a mechanism for uncertainty compression. In real asset capital formation, compressed uncertainty alters posterior distributions, reduces variance, and shifts optimal capital timing. The allocator who understands the mechanics of inference beneath the model gains structural leverage. Capital decisions become formal exercises in probabilistic updating rather than narrative speculation. Deep learning therefore represents not automation of geology but formalization of inference. When interpreted through statistical learning theory, it becomes a tool for aligning technical pattern recognition with disciplined capital deployment in environments defined by complexity and nonstationarity.